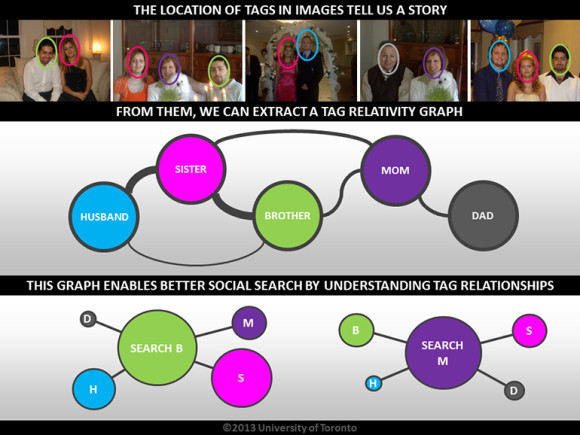

The search tool uses tag locations to quantify relationships between individuals, even those not tagged in any given photo.

Imagine you and your mother are pictured together, building a sandcastle at the beach. You’re both tagged in the photo quite close together. In the next photo, you and your father are eating watermelon. You’re both tagged.

Because of your close “tagging” relationship with your mother in the first picture and with your father in the second, the algorithm can determine that a relationship exists between your mother and father and quantify how strong it may be.

In a third photo, you fly a kite with both parents, but only your mother is tagged. Given the strength of your tagging relationship with your parents, when you search for photos of your father the algorithm can return the untagged photo because of the very high likelihood he’s pictured.
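The three-photo example can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' published method: the tag coordinates, the closeness formula `1 / (1 + distance)`, the one-hop product used to link two people through a shared third person, and the function names are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations
from math import hypot

# Hypothetical data: photo id -> {person: (x, y) tag position, scaled to 0..1}.
photos = {
    "beach": {"you": (0.40, 0.50), "mother": (0.55, 0.50)},
    "watermelon": {"you": (0.30, 0.60), "father": (0.50, 0.60)},
    "kite": {"mother": (0.70, 0.40)},  # you and your father are pictured but untagged
}

def direct_strengths(photos):
    """Sum an (assumed) closeness score for each pair tagged in the same photo."""
    strength = defaultdict(float)
    for tags in photos.values():
        for a, b in combinations(sorted(tags), 2):
            (ax, ay), (bx, by) = tags[a], tags[b]
            strength[(a, b)] += 1.0 / (1.0 + hypot(ax - bx, ay - by))
    return strength

def relationship(strength, a, b, people):
    """Direct strength plus an (assumed) one-hop link through a shared third person."""
    def s(p, q):
        return strength.get(tuple(sorted((p, q))), 0.0)
    indirect = sum(s(a, c) * s(c, b) for c in people if c not in (a, b))
    return s(a, b) + indirect

def search(photos, person):
    """Rank photos for `person`: tagged photos first, then likely untagged ones."""
    strength = direct_strengths(photos)
    people = {p for tags in photos.values() for p in tags}
    results = []
    for photo_id, tags in photos.items():
        if person in tags:
            score = float("inf")  # an explicit tag always ranks first
        else:
            score = sum(relationship(strength, person, other, people) for other in tags)
        if score > 0:
            results.append((photo_id, score))
    return [pid for pid, _ in sorted(results, key=lambda r: r[1], reverse=True)]

print(search(photos, "father"))
```

In this sketch a search for `"father"` returns the kite photo even though he is not tagged in it, because his inferred link to your mother runs through you. The sketch only ranks candidates; it also scores the beach photo, since that photo contains people related to him, so a real system would need a threshold or further evidence to decide who is actually pictured.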

“Two things are happening: we understand relationships, and we can search images better,” says Parham Aarabi, a professor in the Department of Electrical and Computer Engineering at the University of Toronto, who helped develop the algorithm.

The tool, called relational social image search, achieves high reliability without using computationally intensive object- or facial-recognition software.

“If you want to search a trillion photos, normally that takes at least a trillion operations. It’s based on the number of photos you have,” says Aarabi. “Facebook has almost half a trillion photos, but a billion users—it’s almost a 500 order of magnitude difference.

“Our algorithm is simply based on the number of tags, not on the number of photos, which makes it more efficient to search than standard approaches.”
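One way to picture a search whose cost depends on tags rather than photos is an index keyed by person. The sketch below is an assumption made for illustration, not a description of the researchers' data structure; the tag records and the names `build_index` and `candidates` are hypothetical.

```python
from collections import defaultdict

# Hypothetical tag records: (photo_id, person). Untagged photos never appear here.
tag_records = [
    ("beach", "you"),
    ("beach", "mother"),
    ("watermelon", "you"),
    ("watermelon", "father"),
    ("kite", "mother"),
]

def build_index(tag_records):
    """One pass over the tags; the work grows with the tag count, not the photo count."""
    index = defaultdict(set)
    for photo_id, person in tag_records:
        index[person].add(photo_id)
    return index

def candidates(index, person, related):
    """Collect photos tagged with `person` or with anyone in `related`."""
    found = set(index.get(person, set()))
    for other in related:
        found |= index.get(other, set())
    return found

index = build_index(tag_records)
print(sorted(candidates(index, "father", related=["mother"])))
```

A lookup here reads only the index entries for the person searched and the people related to them, so photos that carry no relevant tags are never visited.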

Currently the algorithm’s interface is primarily for research, but Aarabi aims to see it incorporated into the back end of large image databases or social networks.

“I envision the interface would be exactly like you use Facebook search—for users, nothing would change. They would just get better results,” says Aarabi.

The Natural Sciences and Engineering Research Council of Canada supported the project. The work will be presented at the IEEE International Symposium on Multimedia Dec. 10, 2013. This month, the United States Patent and Trademark Office will issue a patent on this technology.