- A way to detect deepfake images of people has emerged from the world of astronomy. Researchers adapted a method normally used to study galaxies. It relies on the Gini coefficient, which measures how the light in an image of a galaxy is distributed among its pixels.

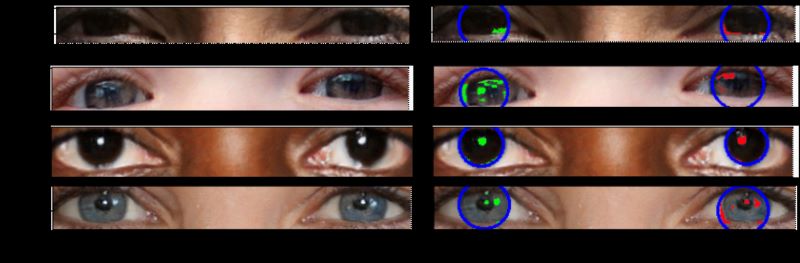

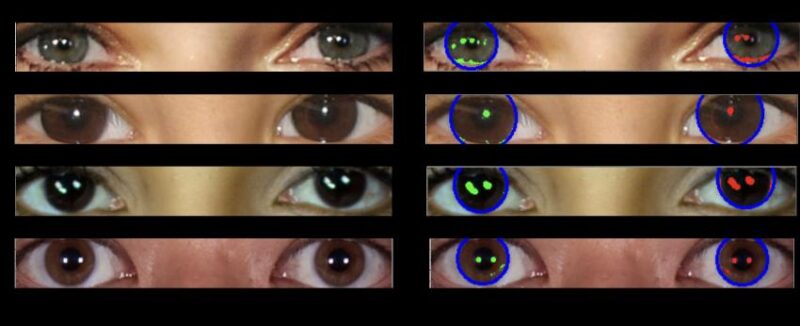

- The method can be used to examine the reflections in the eyes of people in real and deepfake (AI-generated) images. If the reflections in the two eyes don’t match, the image is most likely fake.

- It’s not perfect at detecting fake images. But, the researchers said, “this method provides us with a basis, a plan of attack, in the arms race to detect deepfakes.”

The Royal Astronomical Society published this original article on July 17, 2024. Edits by EarthSky.

How to spot an AI-generated image with science

In an era when the creation of artificial intelligence (AI) images is at the fingertips of the masses, the ability to detect fake pictures – particularly deepfakes of people – is becoming increasingly important.

So what if you could tell just by looking into someone’s eyes?

That’s the compelling finding of new research shared at the Royal Astronomical Society’s National Astronomy Meeting in Hull, U.K., which suggests that AI-generated fakes can be spotted by analyzing human eyes in the same way that astronomers study pictures of galaxies.

The crux of the work, by University of Hull M.Sc. student Adejumoke Owolabi, is all about the reflection in a person’s eyeballs.

If the reflections match, the image is likely to be that of a real human. If they don’t, it’s probably a deepfake.

Kevin Pimbblet is professor of astrophysics and director of the Centre of Excellence for Data Science, Artificial Intelligence and Modelling at the University of Hull. Pimbblet said:

“The reflections in the eyeballs are consistent for the real person, but incorrect (from a physics point of view) for the fake person.”

Like stars in their eyes

Researchers analyzed reflections of light on the eyeballs of people in real and AI-generated images. They then employed methods typically used in astronomy to quantify the reflections and checked for consistency between left and right eyeball reflections.

Fake images often lack consistency in the reflections between each eye, whereas real images generally show the same reflections in both eyes. Pimbblet said:

“To measure the shapes of galaxies, we analyze whether they’re centrally compact, whether they’re symmetric, and how smooth they are. We analyze the light distribution.

“We detect the reflections in an automated way and run their morphological features through the CAS [concentration, asymmetry, smoothness] and Gini indices to compare similarity between left and right eyeballs.

“The findings show that deepfakes have some differences between the pair.”
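To give a feel for what one of the CAS indices measures, here is a minimal sketch of an asymmetry score in Python. It assumes one common simplified form of the index: rotate the image by 180 degrees, subtract it from the original, and normalize by the total light. The function name `asymmetry` is ours, the background correction astronomers normally apply is omitted, and this is not the researchers' actual pipeline.

```python
import numpy as np

def asymmetry(image):
    """Simplified asymmetry score (assumed form, no background correction).

    0 means the image looks identical after a 180-degree rotation;
    larger values mean the light is more lopsided.
    """
    img = np.asarray(image, dtype=float)
    rotated = np.rot90(img, 2)          # two 90-degree turns = 180 degrees
    total = np.abs(img).sum()
    if total == 0:
        return 0.0                      # blank patch: nothing to compare
    return float(np.abs(img - rotated).sum() / total)

# Toy examples: a uniform patch versus a single bright pixel in one corner.
uniform = np.ones((5, 5))
lopsided = np.zeros((5, 5))
lopsided[0, 0] = 1.0

print(asymmetry(uniform))   # perfectly symmetric patch
print(asymmetry(lopsided))  # all light on one side
```

With this normalization the score runs from 0 (perfectly symmetric) up to 2 (no overlap at all between the image and its rotated copy).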

Distribution of light

The Gini coefficient is normally used to measure how the light in an image of a galaxy is distributed among its pixels. Astronomers make this measurement by ordering the pixels that make up the image of a galaxy in ascending order by flux. Then they compare the result to what would be expected from a perfectly even flux distribution.

A Gini value of 0 is a galaxy in which the light is evenly distributed across all of the image’s pixels. A Gini value of 1 is a galaxy with all light concentrated in a single pixel.
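For readers who want to see the arithmetic, the calculation described above can be sketched in a few lines of Python. This assumes the rank-weighted form of the Gini coefficient that is common in galaxy studies; the function name `gini` and the toy "eye patches" are illustrative only and are not taken from the researchers' code.

```python
import numpy as np

def gini(pixels):
    """Gini coefficient of pixel fluxes.

    0 = light spread evenly over all pixels, 1 = all light in one pixel.
    """
    # Flatten the image and sort the pixel fluxes in ascending order.
    flux = np.sort(np.abs(np.asarray(pixels, dtype=float)).ravel())
    n = flux.size
    if n < 2 or flux.sum() == 0:
        return 0.0                      # undefined cases treated as "even"
    ranks = np.arange(1, n + 1)
    # Weight each sorted pixel by its rank and compare with an even spread.
    return float(np.sum((2 * ranks - n - 1) * flux) / (flux.mean() * n * (n - 1)))

# Hypothetical stand-ins for cropped reflections in the left and right eye.
left_eye = np.ones((8, 8))              # light spread evenly
right_eye = np.zeros((8, 8))
right_eye[3, 4] = 1.0                   # all light in a single pixel

g_left, g_right = gini(left_eye), gini(right_eye)
print(g_left, g_right, abs(g_left - g_right))
```

In this toy case the two patches sit at opposite ends of the scale. In the eye test, a large gap between the left and right values would be a hint that the reflections are inconsistent. How large a gap counts as suspicious is not stated in the article.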

The team also tested the CAS parameters, a set of measures originally developed by astronomers to characterize the light distribution of galaxies and determine their morphology, but found they were not a successful predictor of fake eyes. Pimbblet said:

“It’s important to note that this is not a silver bullet for detecting fake images.

“There are false positives and false negatives; it’s not going to get everything. But this method provides us with a basis, a plan of attack, in the arms race to detect deepfakes.”

Bottom line: A method astronomers use to measure light from galaxies can also be used to tell whether a photo of someone is real or a deepfake AI-generated image.

Via Royal Astronomical Society
